Agent to Agent Testing Platform vs Prompt Builder

Side-by-side comparison to help you choose the right AI tool.

Agent to Agent Testing Platform

Revolutionize AI agent performance with our platform that tests chat, voice, and multimodal interactions for bias and…

Last updated: February 28, 2026

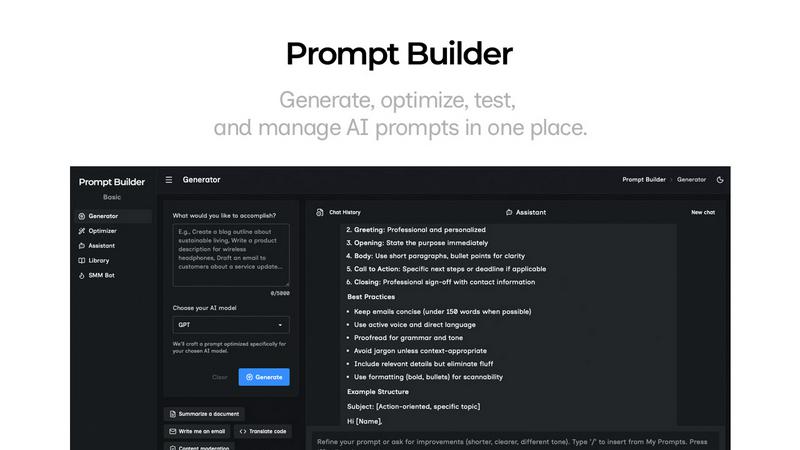

Prompt Builder

Prompt Builder instantly crafts and refines AI prompts for any model, turning ideas into perfect results in seconds.

Last updated: April 13, 2026

Feature Comparison

Agent to Agent Testing Platform

Automated Scenario Generation

This feature enables the creation of diverse test cases automatically, simulating a wide array of interactions for AI agents, including chat, voice, and hybrid scenarios. This ensures that agents are thoroughly tested across various contexts and user interactions.

True Multi-Modal Understanding

The platform allows users to define detailed requirements or upload Product Requirement Documents (PRDs) encompassing various input types, such as text, images, audio, and video. This capability ensures that the AI agent under test can accurately respond to complex, real-world scenarios.

Diverse Persona Testing

By leveraging a range of personas, the platform simulates different end-user behaviors, needs, and interactions. This ensures that AI agents can effectively cater to various user types, from international callers to digital novices, enhancing their performance across audiences.
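As a rough illustration of how scenario generation and persona testing can combine, the sketch below crosses modalities, personas, and user intents into a test matrix. The modalities and personas come from the descriptions above; the intents, the function name `build_test_matrix`, and the dictionary layout are hypothetical and do not describe the platform's actual API.

```python
from itertools import product

# Modalities and personas are taken from the feature descriptions;
# the intents are made-up examples for illustration only.
MODALITIES = ["chat", "voice", "hybrid"]
PERSONAS = ["international caller", "digital novice"]
INTENTS = ["check order status", "cancel subscription"]


def build_test_matrix(modalities, personas, intents):
    """Return one test case per (modality, persona, intent) combination."""
    return [
        {"modality": m, "persona": p, "intent": i}
        for m, p, i in product(modalities, personas, intents)
    ]


test_cases = build_test_matrix(MODALITIES, PERSONAS, INTENTS)
print(len(test_cases))  # 3 modalities x 2 personas x 2 intents = 12
```

A full cross product grows quickly, so a real test plan would likely sample or prune combinations rather than run all of them.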

Regression Testing with Risk Scoring

The platform runs end-to-end regression tests and attaches a risk score to the results. The scores highlight potential areas of concern, so teams can prioritize critical issues and focus testing where it has the most impact.
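The page does not say how the risk score is computed. The sketch below shows one common way to prioritize regression results, assuming a score of severity multiplied by observed failure rate. The `RegressionResult` type, the 1 to 5 severity scale, and the sample data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegressionResult:
    scenario: str
    runs: int
    failures: int
    severity: int  # hypothetical scale: 1 (cosmetic) to 5 (critical)


def risk_score(result: RegressionResult) -> float:
    """Assumed formula: severity weighted by the observed failure rate."""
    if result.runs == 0:
        return 0.0
    return result.severity * (result.failures / result.runs)


def prioritize(results):
    """Order results from highest to lowest risk score."""
    return sorted(results, key=risk_score, reverse=True)


results = [
    RegressionResult("refund request (chat)", runs=50, failures=5, severity=3),
    RegressionResult("identity check (voice)", runs=40, failures=4, severity=5),
    RegressionResult("small talk (chat)", runs=100, failures=20, severity=1),
]
ranked = prioritize(results)
for r in ranked:
    print(f"{r.scenario}: {risk_score(r):.2f}")
```

In this sample the small-talk scenario fails most often but ranks last, because its low severity outweighs its failure rate.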

Prompt Builder

Model-Optimized Prompt Generator

This is the genesis engine of your prompt workflow. Describe your objective in plain English and select your target AI model (e.g., Gemini 1.5 Pro, Claude 3 Opus, GPT-4). The Generator instantly crafts a structurally tuned prompt that aligns with the specific nuances and optimal formatting of that model. It moves beyond one-size-fits-all templates, providing a solid, context-aware draft that serves as the perfect starting point for refinement, drastically reducing initial setup time and improving first-attempt accuracy.

Integrated Chat Assistant & Workspace

Prompt Builder features a fully integrated, multi-model chat environment for seamless testing and refinement. You can run your generated or library prompts directly within the platform using an assistant model of your choice (Grok, DeepSeek, etc.), eliminating disruptive context-switching between tools. This chat-first workspace preserves your entire iteration history, allowing you to pin breakthrough follow-ups and save the most effective conversational threads alongside the original prompt for perfect reproducibility.

Intelligent Prompt Optimizer

The Optimizer acts as your AI-powered prompt surgeon. Paste any existing prompt—whether from your library, a previous project, or an external source—and the Optimizer deconstructs and enhances it. It applies structured improvements for clarity, adds precise constraints, refines output formats, and suggests relevant examples. This feature transforms vague or underperforming prompts into robust, instruction-following powerhouses, with all optimization history auto-saved for review.

Centralized Prompt Library & Community Hub

This feature provides a dynamic, searchable repository for all your prompt assets. Your personal library allows you to categorize, pin, edit, and instantly deploy any saved prompt. Beyond your own work, you gain access to a curated vault of Community Prompts—pre-built, high-performing templates from other users. This turns prompt engineering from a solitary task into a collaborative, knowledge-sharing ecosystem, enabling you to discover and adapt proven solutions for countless use cases.

Use Cases

Agent to Agent Testing Platform

Quality Assurance for Chatbots

Enterprises can utilize the platform to rigorously test chatbots before deployment, ensuring they perform accurately and effectively in real-world conversations while adhering to compliance standards and user expectations.

Voice Assistant Evaluation

The platform is ideal for validating voice assistants, allowing organizations to assess their performance in diverse acoustic conditions and interactions, ensuring they deliver a seamless user experience.

Phone Caller Agent Testing

By simulating realistic phone interactions, businesses can evaluate the effectiveness and reliability of their AI-powered phone caller agents, ensuring they handle customer inquiries with professionalism and empathy.

Continuous Performance Monitoring

With autonomous testing capabilities, organizations can continuously monitor AI agents post-deployment, ensuring they maintain high performance levels and adapt to evolving user needs and scenarios.

Prompt Builder

Accelerated Content Creation Pipeline

Content teams and solo creators can leverage Prompt Builder as a force multiplier. Use the SMM (social media marketing) Bot to generate platform-tailored social posts (X threads, LinkedIn articles, TikTok scripts) from a single brief, then refine tone and messaging in chat. Save winning content frameworks to the Library to keep brand voice consistent across all channels, turning a creative idea into publish-ready copy in a fraction of the usual time.

Developer-First AI Agent Prototyping

Developers building on AI APIs can use Prompt Builder as a rapid prototyping sandbox. Generate and test multiple prompt variations tuned for different models (Claude for reasoning, GPT for code) to find the most reliable and cost-effective configuration for an agent's task. The Optimizer helps harden prompts against edge cases, and the Library serves as a version-controlled knowledge base for different agent functions, streamlining the development cycle.

Enterprise Knowledge Workflow Standardization

Organizations can deploy Prompt Builder to codify and scale best practices. Teams can build a shared Library of approved, optimized prompts for common tasks like customer email drafting, report analysis, or internal documentation. This ensures every employee uses the most effective, vetted prompts, leading to consistent output quality, reduced token waste on retries, and measurable gains in operational efficiency and AI ROI.

AI Research & Comparative Model Analysis

Researchers and AI enthusiasts use the platform for rigorous, side-by-side model testing. Turning a single intent into multiple model-tuned prompts and running them concurrently in the Assistant allows direct comparison of outputs from Gemini, Claude, Llama, and others. This supports deep analysis of model strengths, weaknesses, and response characteristics, while the chat history and Library keep an organized record of every experiment.

Overview

About Agent to Agent Testing Platform

Agent to Agent Testing Platform is an AI-native quality assurance framework designed specifically for validating the behavior of AI agents in real-world scenarios. As autonomous AI systems become more prevalent and less predictable, traditional quality assurance (QA) models developed for static software are no longer sufficient. The platform goes beyond basic prompt-level evaluations by assessing full, multi-turn conversations across chat, voice, and phone interactions, so enterprises can rigorously validate AI agents before deploying them to production. A specialized assurance layer generates tests using more than 17 distinct AI agents, which are engineered to uncover long-tail failures, edge cases, and complex interaction patterns that manual testing often overlooks. With autonomous synthetic user testing, the platform can simulate thousands of realistic interactions at scale and check performance against critical metrics such as bias, toxicity, and hallucination.

About Prompt Builder

Prompt Builder is the definitive prompt engineering operating system, engineered to dismantle the friction between human intent and machine output. It transcends being a simple tool; it's a comprehensive, chat-native workspace designed for the iterative creation, testing, and systematic management of AI prompts. At its core, Prompt Builder solves the critical inefficiency of manually rewriting prompts for different large language models (LLMs). You simply describe your task in natural language, select your target model—be it GPT, Claude, Gemini, Llama, or any other leading AI—and the platform generates a model-optimized, professional-grade prompt draft. This foundational prompt is then refined through an integrated chat interface, allowing for rapid iteration. Every successful version can be saved, pinned, and organized into a personal or team library, creating a living repository of high-performance prompts. Built for developers, content creators, marketers, and AI power users, Prompt Builder's revolutionary value proposition is turning hours of prompt-crafting guesswork into a streamlined workflow of seconds, ensuring consistent, high-quality outputs and maximizing the return on every AI interaction.

Frequently Asked Questions

Agent to Agent Testing Platform FAQ

What types of AI agents can be tested using the platform?

The Agent to Agent Testing Platform supports a wide range of AI agents, including chatbots, voice assistants, and phone caller agents, across various testing scenarios.

How does the platform ensure comprehensive testing?

The platform employs automated scenario generation and diverse persona testing to create extensive test cases that simulate real-world interactions, ensuring comprehensive evaluation of AI agent performance.

Can the platform integrate with existing CI/CD pipelines?

Yes, the Agent to Agent Testing Platform seamlessly integrates with existing CI/CD frameworks, facilitating streamlined test orchestration and quick feedback loops.

What metrics can be evaluated during testing?

Key metrics include bias, toxicity, hallucination, effectiveness, accuracy, empathy, and professionalism, allowing for a thorough assessment of AI agent behavior in diverse scenarios.
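One way a CI/CD pipeline could consume metrics like these is a simple quality gate that fails the build when a measured rate exceeds a limit. This is a sketch under stated assumptions: the threshold values, the report format, and the function names are hypothetical, and the platform's real integration may look quite different.

```python
# Hypothetical maximum acceptable rates per metric (fraction of test conversations).
THRESHOLDS = {"bias": 0.05, "toxicity": 0.01, "hallucination": 0.10}


def find_violations(report, thresholds):
    """Return metric names that are missing from the report or above their limit."""
    violations = []
    for name, limit in thresholds.items():
        rate = report.get(name)
        if rate is None or rate > limit:
            violations.append(name)
    return violations


def gate(report, thresholds=THRESHOLDS):
    """Return a process exit code: 0 if every metric passes, 1 otherwise."""
    return 1 if find_violations(report, thresholds) else 0


# Example report, as it might be parsed from a test run's output (made-up numbers).
report = {"bias": 0.02, "toxicity": 0.03, "hallucination": 0.10}
violations = find_violations(report, THRESHOLDS)
exit_code = gate(report)
print(violations, exit_code)
```

A CI script would pass `exit_code` to `sys.exit` so the pipeline stops on a failed gate. Treating a missing metric as a violation is a deliberate choice here, so an incomplete report cannot pass silently.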

Prompt Builder FAQ

Which AI models does Prompt Builder support?

Prompt Builder is architected for model agnosticism and supports a vast and growing ecosystem of large language models. This includes industry leaders like OpenAI's GPT series, Anthropic's Claude, Google's Gemini, Meta's Llama, Mistral AI's models, xAI's Grok, DeepSeek, Perplexity, and Cohere. The platform continuously updates to integrate new and updated models, ensuring your prompt engineering workflow remains future-proof.

How does the "model-optimized" prompt generation work?

The system utilizes advanced heuristics and learned structural patterns specific to each supported LLM. When you select a target model, the Generator tailors the prompt's architecture, instruction phrasing, constraint placement, and output formatting to align with how that particular model has been trained to process information most effectively. This eliminates the guesswork, providing a prompt engineered for higher fidelity and fewer hallucinations from the first interaction.
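The general idea of tailoring one task description to different target models can be sketched with per-model templates. The two layouts below (XML-style tags and markdown headings) and their mapping to model families are illustrative assumptions only; they are not Prompt Builder's actual heuristics, which the page does not disclose.

```python
from typing import Callable, Dict, List


def xml_style(task: str, constraints: List[str]) -> str:
    """Wrap the task and each constraint in XML-style tags."""
    rules = "\n".join(f"  <rule>{c}</rule>" for c in constraints)
    return f"<task>{task}</task>\n<rules>\n{rules}\n</rules>"


def markdown_style(task: str, constraints: List[str]) -> str:
    """Lay out the task and constraints under markdown headings."""
    rules = "\n".join(f"- {c}" for c in constraints)
    return f"## Task\n{task}\n\n## Constraints\n{rules}"


# Hypothetical mapping from model family to prompt layout.
TEMPLATES: Dict[str, Callable[[str, List[str]], str]] = {
    "claude": xml_style,
    "gpt": markdown_style,
}


def generate_prompt(model_family: str, task: str, constraints: List[str]) -> str:
    """Pick the layout for the model family, falling back to markdown."""
    template = TEMPLATES.get(model_family.lower(), markdown_style)
    return template(task, constraints)


print(generate_prompt("claude", "Summarize the report", ["Use three bullet points"]))
```

The same task and constraints produce differently structured prompts depending on the selected family, which is the behavior the answer above describes at a high level.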

What is the difference between the Generator, Optimizer, and Assistant?

These are the three core, interconnected modules of the Prompt Builder workflow. The Generator creates a new prompt from a natural language idea. The Optimizer enhances and refines an existing prompt you provide. The Assistant is the execution and testing environment where you run prompts (from the Generator, Optimizer, or Library) in a live chat with a chosen AI model to see outputs and iterate. Think of it as Create (Generator), Enhance (Optimizer), and Test/Execute (Assistant).

Can I collaborate with my team on prompts in the Library?

Yes, Prompt Builder is designed for collaborative intelligence. Team plans allow you to create shared workspaces and libraries where prompts can be co-created, refined, and organized. Team members can search, run, and pin from the collective repository, ensuring everyone uses the latest, most effective versions. This transforms individual prompt mastery into a scalable, institutional asset, driving uniform quality and efficiency across projects.

Alternatives

Agent to Agent Testing Platform Alternatives

The Agent to Agent Testing Platform is an AI-native quality assurance framework designed to validate the behavior of AI agents across chat, voice, and phone. As enterprises adopt autonomous AI systems, the limits of traditional QA models become evident, and teams compare several AI-native testing tools to find the one that fits their needs. Common reasons for exploring alternatives include pricing constraints, specific feature requirements, and compatibility with existing platforms. When selecting an alternative to the Agent to Agent Testing Platform, prioritize solutions that offer robust multi-agent testing, comprehensive coverage of interaction scenarios, and a focus on security and compliance. Scalability and the ability to simulate real-world interactions also strongly affect how well a solution assures the quality of AI behavior.

Prompt Builder Alternatives

Prompt Builder is a next-generation prompt engineering workspace, a category-defining tool within the AI assistant ecosystem. It transforms raw ideas into optimized, model-specific AI prompts through an intuitive, chat-driven interface. This platform consolidates the entire prompt lifecycle—generation, testing, optimization, and management—into a single, cohesive environment. Users explore alternatives for various strategic reasons. Some seek different pricing models or free tiers, while others require specific integrations or platform exclusivity. Feature depth, such as advanced analytics, team collaboration tools, or support for niche AI models, also drives the search for other solutions. When evaluating other platforms, consider core engineering capabilities. Look for robust testing suites, version control for prompts, and broad model compatibility. The ideal alternative should accelerate your workflow, not complicate it, offering a seamless bridge between human intent and machine execution.