LovieChat.ai vs OpenMark AI

Side-by-side comparison to help you choose the right AI tool.

LovieChat.ai is your versatile AI companion, offering personalized chats with unique characters, memories, and voice interactions—all for free.

Last updated: March 18, 2026

OpenMark AI benchmarks 100+ LLMs on your task: cost, speed, quality & stability. Browser-based; no provider API keys for hosted runs.

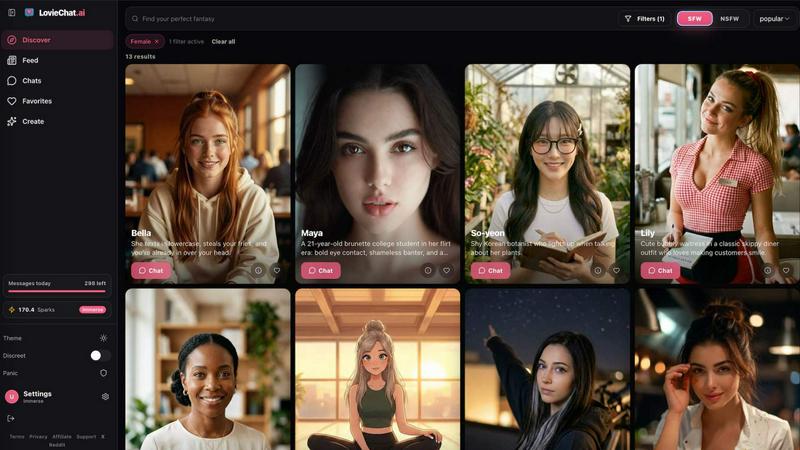

Overview

About LovieChat.ai

LovieChat.ai is an AI companion platform for people who want ongoing conversations with AI characters. Users can choose from more than 100 companions, each with its own personality, backstory, and conversation style. Its main differentiator is a three-layer memory system that lets companions retain user preferences, recall shared experiences, and reference past interactions across sessions, which gives conversations continuity from one session to the next.

The platform is aimed at anyone looking for companionship, entertainment, or a supportive conversational partner, and it emphasizes user privacy. Other features include real-time voice chat, customizable companions, and voice narration. LovieChat.ai is web-only, so it is available on any device with a browser.

About OpenMark AI

OpenMark AI is a web application for task-level LLM benchmarking. You describe what you want to test in plain language, run the same prompts against many models in one session, and compare cost per request, latency, scored quality, and stability across repeat runs, so you see variance, not a single lucky output.
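To make "variance, not a single lucky output" concrete, here is a minimal sketch of how repeat runs could be summarized per model. It is not OpenMark AI's implementation: the `Run` record, the `summarize` function, the field names, and the 0-to-1 quality scale are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Run:
    """One benchmark run of one model (hypothetical record shape)."""
    model: str
    cost_usd: float    # cost of this single request
    latency_s: float   # wall-clock time for the response
    quality: float     # scored quality, assumed to lie in 0..1


def summarize(runs):
    """Group runs by model and report averages plus the spread of quality."""
    by_model = {}
    for run in runs:
        by_model.setdefault(run.model, []).append(run)

    summary = {}
    for model, model_runs in by_model.items():
        qualities = [r.quality for r in model_runs]
        summary[model] = {
            "runs": len(model_runs),
            "mean_cost_usd": mean(r.cost_usd for r in model_runs),
            "mean_latency_s": mean(r.latency_s for r in model_runs),
            "mean_quality": mean(qualities),
            # Population standard deviation: 0.0 means every repeat scored the same.
            "quality_spread": pstdev(qualities),
        }
    return summary
```

Two models can share the same mean quality while one has a much larger `quality_spread`. A single run would hide that difference, which is why the same task is repeated.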

The product is built for developers and product teams who need to choose or validate a model before shipping an AI feature. Hosted benchmarking uses credits, so you do not need to configure separate OpenAI, Anthropic, or Google API keys for every comparison.

You get side-by-side results with real API calls to models, not cached marketing numbers. Use it when you care about cost efficiency (quality relative to what you pay), not just the cheapest token price on a datasheet.
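One simple way to read "quality relative to what you pay" is quality score divided by cost per request. The sketch below uses that ratio as an assumption. The source does not state OpenMark AI's formula, and the function names, model names, and numbers are hypothetical.

```python
def cost_efficiency(quality, cost_usd):
    """Quality points per dollar (an assumed definition, not OpenMark AI's)."""
    if cost_usd <= 0:
        raise ValueError("cost_usd must be positive")
    return quality / cost_usd


def rank_by_efficiency(results):
    """Return model names sorted from most to least cost-efficient.

    `results` maps a model name to a (quality, cost_usd) pair.
    """
    return sorted(
        results,
        key=lambda name: cost_efficiency(*results[name]),
        reverse=True,
    )


# Hypothetical numbers: (scored quality in 0..1, cost per request in USD).
example = {
    "cheap": (0.30, 0.001),
    "mid": (0.90, 0.002),
    "premium": (0.95, 0.010),
}
ranking = rank_by_efficiency(example)
```

With these made-up figures, `ranking` puts `mid` first, then `cheap`, then `premium`. The cheapest model is not the most cost-efficient, and neither is the highest-scoring one.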

OpenMark AI supports a large catalog of models and focuses on pre-deployment decisions: which model fits this workflow, at what cost, and whether outputs are consistent when you run the same task again. Free and paid plans are available; details are shown in the in-app billing section.