JSON to Video vs Wan 2.7 AI

Side-by-side comparison to help you choose the right AI tool.

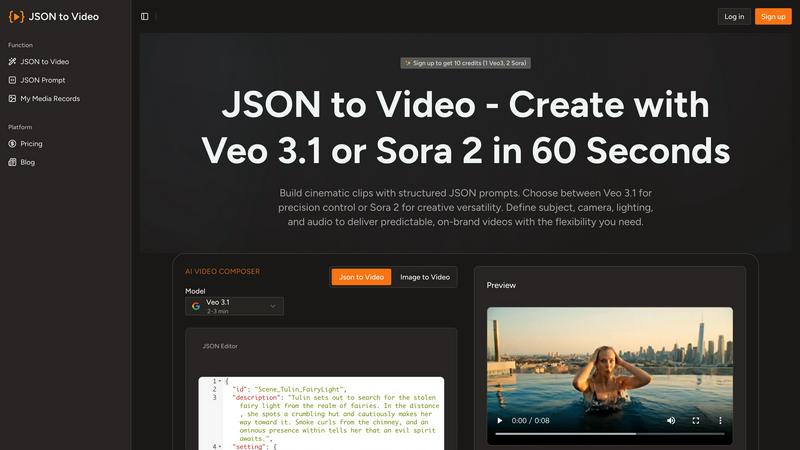

JSON to Video

Create cinematic videos from structured JSON prompts with JSON to Video's generative video platform.

Last updated: February 28, 2026

Wan 2.7 AI

Wan 2.7 AI is a next-generation video generator that turns text and images into cinematic, multi-shot stories with fine-grained control.

Last updated: April 13, 2026

Feature Comparison

JSON to Video

Structured JSON Input

JSON to Video uses a structured JSON schema that lets users define each element of a video. Instead of writing a free-form text prompt, creators specify cinematography, environment, and audio as explicit fields. This gives them precise control and makes the output more likely to match their intent.
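The following is a minimal sketch of what such a structured prompt could look like. It is written in Python and serialized with the standard `json` module. The field names (`subject`, `cinematography`, `environment`, `audio` and their children) and the `build_scene` helper are hypothetical. They only mirror the categories named above and are not the platform's published schema.

```python
import json

# Hypothetical schema: these keys illustrate the cinematography / environment /
# audio split described above; they are NOT JSON to Video's documented fields.
def build_scene(subject: str, camera_move: str, lighting: str,
                color_grade: str, environment: str, soundtrack: str) -> dict:
    """Group one scene's settings into nested cinematography, environment and audio blocks."""
    return {
        "subject": subject,
        "cinematography": {
            "camera_movement": camera_move,
            "lighting": lighting,
            "color_grading": color_grade,
        },
        "environment": {"setting": environment},
        "audio": {"soundtrack": soundtrack},
    }

scene = build_scene(
    subject="a ceramic coffee mug on a wooden table",
    camera_move="slow dolly-in",
    lighting="warm morning window light",
    color_grade="soft teal and orange",
    environment="quiet cafe interior",
    soundtrack="gentle acoustic guitar",
)

# Serialize to the JSON text a user would paste in or send as the prompt.
prompt = json.dumps(scene, indent=2)
print(prompt)
```

Grouping settings into nested objects keeps each category editable on its own. That is the main advantage of a structured prompt over a single free-form sentence.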

Cinematic Quality Control

Users can set camera movement, lighting conditions, and color grading to compose cinematic scenes for their audience. Because these choices are specified explicitly rather than left to the model, videos can keep a consistent, professional look. This supports brand storytelling and engagement.

Multi-Format Support

JSON to Video supports multiple output formats for different platform requirements. Users can produce videos optimized for social media, websites, or presentations, which makes it suitable for many kinds of content distribution.

Quick and Efficient Workflow

The platform can generate a video in as little as 60 seconds, which shortens the production cycle. This speed particularly benefits marketers and brands who need to publish high-quality content quickly to stay competitive.

Wan 2.7 AI

Multi-Modal Generation Engine

Wan 2.7 is not limited to text. It is a multi-modal video workflow that supports text-to-video, image-to-video, and video-to-video generation. Creators can start from a written concept, a visual mood board, or an existing clip. The engine interprets that input and extrapolates from it to generate coherent, dynamic video sequences.

Advanced Motion and Continuity Control

This release improves subject consistency and environmental motion. For multi-shot storytelling, Wan 2.7 keeps characters and objects consistent across scenes, which supports action sequences and narrative flow. It also produces steadier camera movement, more natural pacing, and cleaner motion arcs. As a result, the output reads as a professional sequence rather than a set of disjointed clips.

Hyper-Realistic Visual Fidelity

Built on a significantly upgraded neural model, Wan 2.7 generates visuals with dense lighting, deep perspective, and stable texture rendering aimed at matching high-end production. It handles fine details such as skin texture in portraits, natural light shifts in environments, and the interplay of highlights and shadows, producing a high level of realism.

Granular Creative Parameterization

Creators keep directorial control through settings for aspect ratio, resolution, duration, and audio generation. Beyond these basics, prompt guidance can steer cinematic style, camera blocking, and scene composition. This allows outputs to be tuned for specific needs, from a 9:16 social media teaser to a 21:9 widescreen cinematic worldbuilding demo.

Use Cases

JSON to Video

Marketing Campaigns

Marketers can use JSON to Video to create promotional videos that showcase products or services. Because key visual elements are defined in structured data, campaigns can communicate the brand message consistently and attractively.

Educational Content Creation

Educators and trainers can use the platform to develop instructional videos. Controlling the visual narrative and audio through JSON lets them produce clear, informative content that supports learning.

Brand Storytelling

Brands can use JSON to Video to craft immersive narrative videos. Every detail can be specified, from characters to environments, so brands can build videos that resonate emotionally with their audience and deepen that connection.

Social Media Engagement

Content creators can produce eye-catching videos that drive engagement and shares on social media platforms. Fast generation and high-quality output let them respond to trends promptly while keeping a consistent visual identity.

Wan 2.7 AI

Dynamic Social Media and Ad Content

Rapidly prototype and produce eye-catching promotional videos, product stories, and brand ads tailored for platforms like Instagram, TikTok, and YouTube. The style control and rapid iteration allow marketing teams to test concepts and generate a high volume of platform-optimized content without extensive production timelines or budgets.

Cinematic Storyboarding and Pre-Visualization

Filmmakers and directors can use Wan 2.7 for fast-render storyboard clips to visualize scenes, experiment with lighting and blocking, and secure creative approvals before principal photography. This accelerates pre-production and provides a tangible visual reference for crews, streamlining the entire filmmaking pipeline.

Engaging Educational and Explainer Videos

Educators and content creators can transform complex topics into engaging animated or realistic explainer videos. By inputting a script, they can generate clear, visually compelling sequences that enhance comprehension and retention, making it an ideal tool for e-learning modules, tutorial channels, and corporate training.

Creative Concept Art and Style Exploration

Artists and designers can leverage the image-to-video and style control features to breathe life into static illustrations or explore character motion in specific artistic styles, such as anime or cinematic fantasy. It serves as a powerful tool for visual development, creating motion tests for characters, and building immersive fantasy or sci-fi scenes.

Overview

About JSON to Video

JSON to Video is a generative video platform that turns structured data into cinematic video. It is designed for content creators, marketers, and brands, and it replaces vague text prompts with structured JSON schemas. Users specify each visual and audio detail, including subjects, camera angles, lighting setups, and soundtracks. This gives them more deterministic control over the output and reduces the guesswork and repeated iterations common in traditional video creation. JSON to Video treats each JSON input as a detailed storyboard, so creators can encode their vision directly in the prompt. The platform also supports video generation models such as Veo 3.1 and Seedance 2 to help projects meet professional quality standards.

About Wan 2.7 AI

Wan 2.7 AI is a major step forward in generative video: a creator-focused neural engine designed to close the gap between imagination and finished visuals. It goes beyond simple text-to-video conversion to provide a complete workflow for professional-grade content creation. Filmmakers, marketers, educators, and social media creators can use it to realize their ideas quickly, with control and cinematic quality. Users provide a text prompt, upload an image, or refine an existing video, and a suite of AI models produces coherent video from that input. Its core value lies in an enhanced neural architecture that delivers greater realism, reliable multi-shot continuity, and granular control over motion, lighting, and style. Together these make complex video production accessible to a wide audience.

Frequently Asked Questions

JSON to Video FAQ

How does JSON to Video work?

JSON to Video lets users describe a video as structured JSON that specifies each of its details. The platform translates this input into cinematic scenes, giving precise control over both visuals and audio.

Can I customize the output format of my videos?

Yes, JSON to Video supports multiple output formats, making it easy for users to create videos tailored for various platforms, whether for social media, websites, or presentations.

What types of videos can I create with JSON to Video?

You can create a wide range of videos, including promotional videos, educational content, brand stories, and social media clips. The structured input allows for diverse applications across different industries.

Is there a learning curve for using JSON to Video?

Users unfamiliar with JSON may face an initial learning curve, but the platform is designed to be user-friendly. Its instructions and examples help new users learn the features quickly and apply them to their video projects.

Wan 2.7 AI FAQ

What is the main improvement in Wan 2.7 over Wan 2.6?

Wan 2.7's major upgrades center on enhanced control and continuity. It features a more advanced neural model for significantly improved realism, stronger consistency for characters and objects across multiple shots, and finer-grained control over motion and scene blocking. This results in steadier, more coherent, and professionally paced videos suitable for dynamic storytelling.

What types of input does Wan 2.7 accept?

The platform is a multi-modal AI workflow. It accepts three primary inputs: text prompts (text-to-video), uploaded images (image-to-video), and existing video clips (video-to-video). This allows you to start your creative process from a written idea, a visual reference, or by modifying footage you already have.
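The three input modes can be illustrated with a small helper that picks a mode from whichever inputs are supplied. The function name `generation_mode` is hypothetical. So is the precedence order, in which a video clip wins over an image, an image wins over text alone, and text is treated as optional guidance. These are assumptions for illustration, not documented Wan 2.7 behavior.

```python
from typing import Optional

def generation_mode(text: Optional[str] = None,
                    image_path: Optional[str] = None,
                    video_path: Optional[str] = None) -> str:
    """Pick text-to-video, image-to-video or video-to-video from the supplied inputs.

    Assumed precedence (hypothetical): video > image > text, with text acting as
    optional guidance whenever an image or video is also provided.
    """
    if video_path:
        return "video-to-video"
    if image_path:
        return "image-to-video"
    if text:
        return "text-to-video"
    raise ValueError("provide a text prompt, an image, or a video clip")

print(generation_mode(text="a lighthouse at dusk"))                       # text-to-video
print(generation_mode(text="slow pan", image_path="moodboard.png"))       # image-to-video
print(generation_mode(text="make it rain", video_path="street.mp4"))      # video-to-video
```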

Can I control the length and format of the generated video?

Yes, Wan 2.7 offers extensive customization options. You can set the video duration (e.g., starting from 5 seconds), choose from multiple aspect ratios (including 16:9, 9:16, 1:1, and cinematic 21:9), and select the output resolution (480p, 720p, or 1080p) to match your platform and quality requirements.
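The following is a minimal sketch of validating these settings before submitting a job, using only the options listed above. The `GenerationSettings` class is hypothetical. Its `frame_size` method assumes that the resolution label (480p, 720p, 1080p) names the shorter side of the frame, and it rounds the long side to an even number. The actual pixel dimensions Wan 2.7 produces may differ. No maximum duration is checked because none is stated.

```python
from dataclasses import dataclass
from typing import Tuple

# Options taken from the FAQ answer; 5 s is its stated starting duration.
ASPECT_RATIOS = {"16:9": (16, 9), "9:16": (9, 16), "1:1": (1, 1), "21:9": (21, 9)}
RESOLUTIONS = {"480p": 480, "720p": 720, "1080p": 1080}
MIN_DURATION_S = 5

@dataclass(frozen=True)
class GenerationSettings:  # hypothetical client-side helper, not an official API
    aspect_ratio: str
    resolution: str
    duration_s: int

    def validate(self) -> None:
        """Raise ValueError if any setting is outside the listed options."""
        if self.aspect_ratio not in ASPECT_RATIOS:
            raise ValueError(f"unsupported aspect ratio: {self.aspect_ratio}")
        if self.resolution not in RESOLUTIONS:
            raise ValueError(f"unsupported resolution: {self.resolution}")
        if self.duration_s < MIN_DURATION_S:
            raise ValueError(f"duration must be at least {MIN_DURATION_S} s")

    def frame_size(self) -> Tuple[int, int]:
        """Estimate (width, height), assuming the 'p' value is the shorter side."""
        self.validate()
        w, h = ASPECT_RATIOS[self.aspect_ratio]
        short = RESOLUTIONS[self.resolution]
        long_side = short * max(w, h) / min(w, h)
        long_even = int(round(long_side / 2)) * 2  # encoders usually expect even dimensions
        return (long_even, short) if w >= h else (short, long_even)

print(GenerationSettings("9:16", "1080p", 5).frame_size())  # (1080, 1920)
print(GenerationSettings("21:9", "720p", 10).frame_size())  # (1680, 720)
print(GenerationSettings("16:9", "480p", 5).frame_size())   # (854, 480)
```

Checking settings locally like this catches an unsupported combination before any credits are spent on a job.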

How does the credit system work?

Video generation consumes credits. Each generation job costs a number of credits (for example, 35 credits as shown in the interface), and the cost varies with parameters such as length and resolution. Credits are purchased in packs. You can check your remaining balance on the generation page and buy more through the "Buy Credits" option.
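The credit arithmetic can be sketched as follows. The 35-credit default is only the example figure shown in the interface. Real per-job costs vary with length and resolution, so treat `cost_per_job` as a value you read from the generation page. Both helper functions are hypothetical.

```python
def jobs_affordable(balance: int, cost_per_job: int = 35) -> int:
    """Number of generations at a fixed per-job cost that the balance covers."""
    if cost_per_job <= 0:
        raise ValueError("cost_per_job must be positive")
    return balance // cost_per_job

def credits_to_buy(balance: int, jobs_wanted: int, cost_per_job: int = 35) -> int:
    """Extra credits needed to run jobs_wanted generations at cost_per_job each."""
    return max(0, jobs_wanted * cost_per_job - balance)

print(jobs_affordable(200))     # 5   (5 * 35 = 175, leaving 25 credits)
print(credits_to_buy(200, 10))  # 150 (10 * 35 = 350, minus the 200 on hand)
```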

Alternatives

JSON to Video Alternatives

JSON to Video is a generative video platform that turns structured data into cinematic visuals. Its JSON schema lets users specify visual and audio elements precisely, which suits content creators, marketers, and brands who need high-quality video output. Users look for alternatives for several reasons, including pricing, specific features, or compatibility with platforms they already use. When comparing options, consider ease of use, the level of creative control, and how well the tool integrates into existing workflows. The best choice fits both your creative vision and your operational needs.

Wan 2.7 AI Alternatives

Wan 2.7 AI is an advanced text-to-video generator that turns simple prompts into high-fidelity, professional-grade video. It belongs to the fast-moving category of generative video AI, which aims to make high-end production widely accessible. Users explore alternatives for several reasons: budget constraints, a need for different features such as specific editing tools or integrations, or platform requirements such as mobile-first workflows. Finding the right tool is a normal part of refining a creative tech stack. When evaluating other platforms, weigh the output quality and realism of the core model, the granularity of creative control, workflow efficiency, and overall value. The right alternative should match your production pace and creative vision, not just replicate a single function.