LLMWise vs Prompt Builder

Side-by-side comparison to help you choose the right AI tool.

LLMWise provides one API for comparing and blending models, with pay-per-use pricing so you pay only for the models you actually use.

Last updated: February 28, 2026

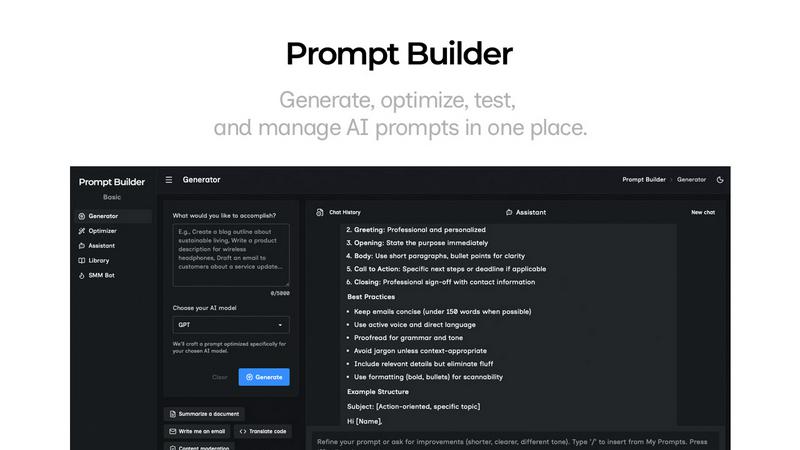

Prompt Builder

Prompt Builder generates and refines AI prompts for a chosen model, turning a plain-language idea into a usable prompt in seconds.

Last updated: April 13, 2026

Feature Comparison

LLMWise

Smart Routing

Smart routing directs each prompt to the model best suited to it. For example, coding queries are sent to GPT, creative writing tasks to Claude, and translation requests to Gemini, so each task is matched with the model whose strengths fit it.
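
As an illustration only, task-based routing of this kind can be sketched as a small keyword classifier. The function name `route_prompt`, the keyword lists, and the model labels are assumptions made for this example; they are not LLMWise's actual routing logic or API.

```python
# Hypothetical sketch of task-based routing; not LLMWise's real implementation.
# The keyword lists and model labels are illustrative assumptions.
ROUTES = {
    "coding": (("function", "bug", "compile", "python", "code"), "gpt"),
    "translation": (("translate", "translation"), "gemini"),
    "creative": (("story", "poem", "write a", "slogan"), "claude"),
}
DEFAULT_MODEL = "gpt"


def route_prompt(prompt: str) -> str:
    """Return the label of the first route whose keywords appear in the prompt."""
    text = prompt.lower()
    for keywords, model in ROUTES.values():
        if any(keyword in text for keyword in keywords):
            return model
    # No keyword matched: fall back to a general-purpose default.
    return DEFAULT_MODEL
```

A production router would more plausibly use a trained classifier or a scoring model rather than keyword matching; the sketch only shows the shape of the decision.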

Compare & Blend

Compare mode runs a prompt across several models simultaneously so users can evaluate the responses side by side. Blend mode synthesizes the strongest parts of the different outputs into a single, cohesive answer, which can improve the quality and relevance of the result.
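
The compare-then-blend flow can be sketched as follows, assuming each model is represented by a plain callable that takes a prompt and returns text. The names `compare` and `blend` and the sentence-merging strategy are hypothetical; how LLMWise actually blends outputs is not described in this comparison.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict

# A "model" here is any callable mapping a prompt to a response string.
# Real provider clients would replace these hypothetical stand-ins.
ModelFn = Callable[[str], str]


def compare(prompt: str, models: Dict[str, ModelFn]) -> Dict[str, str]:
    """Run the same prompt against every model concurrently."""
    with ThreadPoolExecutor(max_workers=max(1, len(models))) as pool:
        futures = {name: pool.submit(fn, prompt) for name, fn in models.items()}
        return {name: future.result() for name, future in futures.items()}


def blend(responses: Dict[str, str]) -> str:
    """Naive placeholder blend: keep each unique sentence once, in order.

    A real blend step would more likely ask another model to synthesize
    the candidates; this only demonstrates the data flow.
    """
    seen = set()
    merged = []
    for text in responses.values():
        for sentence in text.split(". "):
            sentence = sentence.strip().rstrip(".")
            if sentence and sentence.lower() not in seen:
                seen.add(sentence.lower())
                merged.append(sentence)
    return (". ".join(merged) + ".") if merged else ""
```

Calling `compare` with two or more callables returns a dictionary keyed by model name, which can be inspected side by side or passed to `blend`.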

Always Resilient

LLMWise uses a circuit-breaker failover system that reroutes requests to backup models when a primary provider experiences downtime. This keeps applications operational during provider outages and limits disruptions caused by external factors.
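
For readers unfamiliar with the pattern, a circuit breaker with failover can be sketched as below. This is a generic, minimal version of the technique; the class and function names, the thresholds, and the provider tuple layout are assumptions for illustration and do not describe LLMWise's internals.

```python
import time
from typing import Callable, List, Optional, Tuple


class CircuitBreaker:
    """Opens after `max_failures` consecutive errors; allows a retry after `reset_after` seconds."""

    def __init__(self, max_failures: int = 3, reset_after: float = 30.0) -> None:
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.failures = 0
        self.opened_at: Optional[float] = None

    def allow(self) -> bool:
        """Return True if a request may be sent to this provider."""
        if self.opened_at is None:
            return True
        # Half-open: permit a trial request once the cool-down has elapsed.
        return time.monotonic() - self.opened_at >= self.reset_after

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = time.monotonic()


# (name, callable, breaker) in priority order; a hypothetical layout.
Provider = Tuple[str, Callable[[str], str], CircuitBreaker]


def call_with_failover(prompt: str, providers: List[Provider]) -> Tuple[str, str]:
    """Try providers in order, skipping any whose breaker is currently open."""
    for name, fn, breaker in providers:
        if not breaker.allow():
            continue
        try:
            response = fn(prompt)
        except Exception:
            breaker.record_failure()
            continue
        breaker.record_success()
        return name, response
    raise RuntimeError("all providers are unavailable")
```

Once the primary provider's breaker opens, later requests skip it without waiting for another failure, which is what keeps latency stable during an outage.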

Test & Optimize

The test and optimize capabilities include benchmarking suites, batch tests, and optimization policies that target speed, cost, and reliability. Automated regression checks help maintain output quality as developers refine their applications.
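
One way to picture an optimization policy and a regression check is the sketch below. The `BenchmarkResult` fields, the policy names, and the tolerance value are hypothetical choices for this example, not LLMWise's data model.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class BenchmarkResult:
    model: str
    latency_ms: float      # average response latency
    cost_per_call: float   # average cost in credits
    pass_rate: float       # fraction of batch test cases passed, 0.0 to 1.0


def pick_model(results: List[BenchmarkResult], policy: str,
               min_pass_rate: float = 0.9) -> str:
    """Choose a model under a policy: 'speed', 'cost', or 'reliability'."""
    # Only consider models that pass enough tests; fall back to all if none do.
    eligible = [r for r in results if r.pass_rate >= min_pass_rate] or results
    if policy == "speed":
        return min(eligible, key=lambda r: r.latency_ms).model
    if policy == "cost":
        return min(eligible, key=lambda r: r.cost_per_call).model
    if policy == "reliability":
        return max(results, key=lambda r: r.pass_rate).model
    raise ValueError(f"unknown policy: {policy}")


def find_regressions(baseline: Dict[str, float], current: Dict[str, float],
                     tolerance: float = 0.02) -> List[str]:
    """Return models whose pass rate dropped by more than `tolerance`."""
    return sorted(
        model for model, rate in current.items()
        if model in baseline and baseline[model] - rate > tolerance
    )
```

The quality floor (`min_pass_rate`) is applied before optimizing for speed or cost, so a cheap but unreliable model is not selected by default.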

Prompt Builder

Model-Optimized Prompt Generator

The Generator is the starting point of the prompt workflow. Describe your objective in plain English and select your target AI model (e.g., Gemini 1.5 Pro, Claude 3 Opus, GPT-4). The Generator then produces a prompt structured for the conventions and preferred formatting of that model. Rather than a one-size-fits-all template, you get a context-aware draft to refine, which reduces initial setup time and improves first-attempt accuracy.

Integrated Chat Assistant & Workspace

Prompt Builder includes an integrated, multi-model chat environment for testing and refinement. You can run generated or library prompts directly within the platform using an assistant model of your choice (Grok, DeepSeek, etc.), without switching between tools. The workspace preserves your full iteration history, so you can pin useful follow-ups and save the most effective conversation threads alongside the original prompt for reproducibility.

Intelligent Prompt Optimizer

The Optimizer improves existing prompts. Paste any prompt, whether from your library, a previous project, or an external source, and the Optimizer analyzes and rewrites it. It applies structured improvements for clarity, adds precise constraints, refines output formats, and suggests relevant examples. This turns vague or underperforming prompts into more reliable, instruction-following ones, and all optimization history is saved automatically for review.

Centralized Prompt Library & Community Hub

This feature provides a searchable repository for all your prompts. Your personal library lets you categorize, pin, edit, and deploy any saved prompt. You also get access to a curated collection of Community Prompts: pre-built templates shared by other users. This makes prompt engineering a collaborative activity and lets you discover and adapt proven solutions for many use cases.

Use Cases

LLMWise

Rapid Prototyping

LLMWise gives developers access to 30 free models that can be tested at no cost, so teams can prototype, experiment, and iterate on ideas quickly.

Cost Management

By consolidating multiple AI models under one API, LLMWise helps organizations avoid the cost of multiple subscriptions. Developers pay only for what they use, which keeps budgets under control while still providing access to top-tier models.

Enhanced Debugging

Developers can use compare mode to run the same prompt across several models and identify which one performs best for a specific edge case. This reduces debugging time and improves the accuracy of AI-generated responses.

Dynamic Content Creation

Content creators can use LLMWise's blend mode to generate articles, marketing materials, or creative writing. Combining outputs from multiple models can produce richer and more nuanced content.

Prompt Builder

Accelerated Content Creation Pipeline

Content teams and solo creators can use Prompt Builder to speed up content production. Use the SMM Bot to generate platform-tailored social posts (X threads, LinkedIn articles, TikTok scripts) from a single brief, then refine tone and messaging in chat. Save successful content frameworks to the Library to keep brand voice consistent across channels and to move from idea to publish-ready copy faster.

Developer-First AI Agent Prototyping

Developers building on AI APIs can use Prompt Builder as a rapid prototyping sandbox. Generate and test multiple prompt variations tuned for different models (Claude for reasoning, GPT for code) to find the most reliable and cost-effective configuration for an agent's task. The Optimizer helps harden prompts against edge cases, and the Library serves as a version-controlled knowledge base for different agent functions, streamlining the development cycle.

Enterprise Knowledge Workflow Standardization

Organizations can deploy Prompt Builder to codify and scale best practices. Teams can build a shared Library of approved, optimized prompts for common tasks like customer email drafting, report analysis, or internal documentation. This ensures every employee uses the most effective, vetted prompts, leading to consistent output quality, reduced token waste on retries, and measurable gains in operational efficiency and AI ROI.

AI Research & Comparative Model Analysis

Researchers and AI enthusiasts use the platform for side-by-side model testing. The ability to turn a single intent into multiple model-tuned prompts and run them concurrently in the Assistant allows direct comparison of outputs from Gemini, Claude, Llama, and others. This supports analysis of model strengths, weaknesses, and response characteristics, while the chat history and Library keep an organized record of every experiment.

Overview

About LLMWise

LLMWise simplifies the management of multiple language model providers. Designed for developers and teams that need different AI capabilities for different tasks, it consolidates access to large language models (LLMs) behind one unified API. Users can call models from OpenAI, Anthropic, Google, Meta, xAI, and DeepSeek without juggling multiple subscriptions. Intelligent routing automatically matches each prompt to a suitable model based on task requirements. Users can also compare, blend, and optimize responses to improve output quality, which lets developers focus on their product rather than on model management.

About Prompt Builder

Prompt Builder is a chat-native workspace for creating, testing, and managing AI prompts. It addresses the inefficiency of manually rewriting prompts for different large language models (LLMs). You describe your task in natural language, select your target model (GPT, Claude, Gemini, Llama, or another supported model), and the platform generates a model-optimized prompt draft. You then refine this draft through an integrated chat interface, which allows rapid iteration. Every successful version can be saved, pinned, and organized into a personal or team library, building a repository of proven prompts. Built for developers, content creators, marketers, and AI power users, Prompt Builder aims to reduce hours of prompt-crafting guesswork to a workflow of seconds and to make outputs more consistent.

Frequently Asked Questions

LLMWise FAQ

How does LLMWise ensure optimal model selection?

LLMWise employs intelligent routing that automatically matches prompts with the most appropriate model based on the task at hand, ensuring optimal performance and quality.

Can I use my existing API keys with LLMWise?

Yes, LLMWise supports Bring Your Own Keys (BYOK), which lets users plug in their existing provider API keys. This can help reduce costs and maintain flexibility.

Is there a subscription fee for using LLMWise?

No, LLMWise operates on a pay-as-you-go model. Users can start for free and only pay for the credits they consume, eliminating the need for recurring subscription fees.

What happens if a model provider goes down?

LLMWise features a circuit-breaker failover system that automatically reroutes requests to backup models, ensuring that your applications continue to function smoothly without interruptions.

Prompt Builder FAQ

Which AI models does Prompt Builder support?

Prompt Builder is model-agnostic and supports a large and growing set of large language models. This includes OpenAI's GPT series, Anthropic's Claude, Google's Gemini, Meta's Llama, Mistral AI's models, xAI's Grok, DeepSeek, Perplexity, and Cohere. The platform is updated continuously to integrate new and updated models.

How does the "model-optimized" prompt generation work?

The system uses heuristics and learned structural patterns specific to each supported LLM. When you select a target model, the Generator tailors the prompt's structure, instruction phrasing, constraint placement, and output formatting to how that model processes information most effectively. This reduces guesswork and is intended to yield higher fidelity and fewer hallucinations from the first interaction.
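
As a rough illustration of per-model tailoring, the sketch below selects a different prompt layout depending on the target model. The templates, the `generate_prompt` function, and the specific formatting choices (tag-delimited sections for one model, headed sections for another) are assumptions made for this example; Prompt Builder's actual heuristics are not published in this comparison.

```python
# Hypothetical per-model templates; the formatting conventions shown are
# illustrative assumptions, not Prompt Builder's actual heuristics.
TEMPLATES = {
    "claude": (
        "<task>{task}</task>\n"
        "<constraints>{constraints}</constraints>\n"
        "<output_format>{output_format}</output_format>"
    ),
    "gpt": (
        "## Task\n{task}\n\n"
        "## Constraints\n{constraints}\n\n"
        "## Output format\n{output_format}"
    ),
}
# Fallback layout for any model without a dedicated template.
GENERIC = "Task: {task}\nConstraints: {constraints}\nOutput format: {output_format}"


def generate_prompt(task: str, model: str, constraints: str = "None",
                    output_format: str = "Plain text") -> str:
    """Fill the template registered for `model`, or the generic one."""
    template = TEMPLATES.get(model.lower(), GENERIC)
    return template.format(
        task=task.strip(),
        constraints=constraints,
        output_format=output_format,
    )
```

The same task description therefore yields differently structured prompts for different targets, which is the core idea behind model-specific generation.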

What is the difference between the Generator, Optimizer, and Assistant?

These are the three core, interconnected modules of the Prompt Builder workflow. The Generator creates a new prompt from a natural language idea. The Optimizer enhances and refines an existing prompt you provide. The Assistant is the execution and testing environment where you run prompts (from the Generator, Optimizer, or Library) in a live chat with a chosen AI model to see outputs and iterate. Think of it as Create (Generator), Enhance (Optimizer), and Test/Execute (Assistant).

Can I collaborate with my team on prompts in the Library?

Yes. Team plans allow you to create shared workspaces and libraries where prompts can be co-created, refined, and organized. Team members can search, run, and pin prompts from the shared repository, so everyone uses the latest, most effective versions. This makes individual prompt expertise reusable across the team and keeps quality consistent across projects.

Alternatives

LLMWise Alternatives

LLMWise is an API platform that consolidates access to major language models such as GPT, Claude, and Gemini. It belongs to the AI Assistants category and lets developers use the best-suited model for each task without managing multiple AI providers. Users seek alternatives for various reasons, including pricing structures, feature sets, and specific platform requirements. When exploring alternatives, consider the flexibility of payment options, the range of models available, and the capability for intelligent routing. Also evaluate the platform's resilience, its testing and optimization features, and its ease of integration with existing systems.

Prompt Builder Alternatives

Prompt Builder is a prompt engineering workspace in the AI assistant category. It turns raw ideas into optimized, model-specific AI prompts through a chat-driven interface, and it consolidates the prompt lifecycle (generation, testing, optimization, and management) into a single environment. Users explore alternatives for several reasons. Some seek different pricing models or free tiers, while others require specific integrations or platform exclusivity. Feature depth, such as advanced analytics, team collaboration tools, or support for niche AI models, also drives the search for other solutions. When evaluating other platforms, consider core engineering capabilities: robust testing suites, version control for prompts, and broad model compatibility. The ideal alternative should accelerate your workflow, not complicate it.