Agenta

Agenta is an open-source LLMOps platform that gives AI teams a centralized, collaborative workspace for developing LLM applications.

About Agenta

Agenta is an open-source LLMOps platform for building and operating large language model (LLM) applications. It is designed for AI teams, including developers, product managers, and domain experts, who need to ship reliable, production-grade LLM applications rather than prototypes. Because LLM output is inherently unpredictable, teams often lose time to siloed communication, ineffective testing methods, and opaque debugging. Agenta gives teams a single source of truth for the entire LLM lifecycle, so they can experiment with precision, evaluate with evidence, and observe with clarity.

Features of Agenta

Centralized Prompt Management

Agenta offers a centralized platform where prompts, evaluations, and traces are stored and managed, streamlining workflows for the entire team. This feature eliminates the chaos of scattered documentation and ensures that all team members have access to the same resources, enhancing collaboration and minimizing misunderstandings.

Automated Evaluation

Agenta replaces guesswork with a systematic approach to running experiments and tracking results. Automated evaluation allows teams to validate changes based on real evidence, fostering a culture of data-driven decision-making. This feature supports integration with various evaluators, ensuring flexibility and adaptability to different development needs.
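To make the idea concrete, here is a minimal, generic sketch of an automated evaluation loop: a test set is run through an application and each output is scored by a pluggable evaluator. This is not Agenta's API. The names `EvalCase`, `exact_match`, `run_evaluation`, and `toy_app` are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class EvalCase:
    """One test case: the inputs sent to the app and the expected answer."""
    inputs: Dict[str, str]
    expected: str


def exact_match(output: str, expected: str) -> float:
    """Hypothetical evaluator: 1.0 on a case-insensitive exact match, else 0.0."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def run_evaluation(
    app: Callable[[Dict[str, str]], str],
    cases: List[EvalCase],
    evaluator: Callable[[str, str], float],
) -> float:
    """Run every case through the app and return the mean evaluator score."""
    scores = [evaluator(app(case.inputs), case.expected) for case in cases]
    return sum(scores) / len(scores) if scores else 0.0


def toy_app(inputs: Dict[str, str]) -> str:
    """Stand-in for an LLM application (an assumption, not a real model call)."""
    capitals = {"France": "Paris", "Japan": "Tokyo"}
    return capitals.get(inputs["country"], "unknown")


test_set = [
    EvalCase({"country": "France"}, "Paris"),
    EvalCase({"country": "Japan"}, "tokyo"),
    EvalCase({"country": "Peru"}, "Lima"),
]

score = run_evaluation(toy_app, test_set, exact_match)
print(f"mean score: {score:.2f}")
```

Because the evaluator is passed in as a function, the same loop could be reused with a different scoring rule (for example, a similarity threshold) without changing the test set.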

Unified Playground

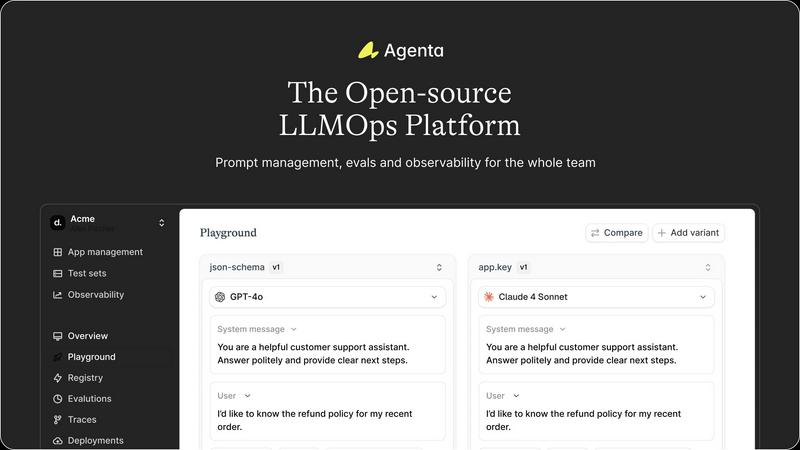

The unified playground feature allows teams to compare prompts and models side-by-side, facilitating quick iterations and improvements. It includes a complete version history, enabling teams to track changes over time and providing the ability to test different models without being locked into a single provider.

Trace Annotation and Debugging

Agenta enables teams to trace every request and identify exact failure points in their AI systems. With the ability to annotate traces collaboratively, teams can gather feedback from both users and experts. This feature closes the feedback loop by allowing any trace to be turned into a test with a single click, significantly enhancing debugging efficiency.
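The trace-to-test idea can be sketched in a few lines. The example below is a generic illustration and does not reflect Agenta's data model or API: `Trace`, `Annotation`, and `trace_to_test_case` are hypothetical names. It assumes that a reviewer's corrected output, when present, should become the expected answer of the new test case.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Annotation:
    """Feedback left on a trace by a user or domain expert (hypothetical shape)."""
    reviewer: str
    is_correct: bool
    corrected_output: Optional[str] = None


@dataclass
class Trace:
    """A recorded request: what went in, what came out, and any feedback."""
    trace_id: str
    inputs: Dict[str, str]
    output: str
    annotations: List[Annotation] = field(default_factory=list)


def trace_to_test_case(trace: Trace) -> Dict[str, object]:
    """Convert a trace into a test case.

    Assumption: the most recent reviewer correction, if any, is the expected
    output; otherwise the recorded output is kept as the expectation.
    """
    corrections = [a.corrected_output for a in trace.annotations if a.corrected_output]
    expected = corrections[-1] if corrections else trace.output
    return {
        "inputs": dict(trace.inputs),
        "expected": expected,
        "source_trace": trace.trace_id,
    }


failed = Trace(
    trace_id="trace-001",
    inputs={"question": "What is the capital of Australia?"},
    output="Sydney",
    annotations=[Annotation("expert-a", is_correct=False, corrected_output="Canberra")],
)

test_case = trace_to_test_case(failed)
print(test_case)
```

Keeping the `source_trace` identifier on the test case lets a failing test be linked back to the original request.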

Use Cases of Agenta

Rapid Prototyping of AI Applications

Agenta is ideal for teams looking to rapidly prototype AI applications. By centralizing workflows and providing tools for evaluation and collaboration, developers can quickly iterate on prompts and models, significantly speeding up the development cycle.

Performance Monitoring and Improvement

With Agenta's observability features, teams can monitor the performance of their AI applications in real time. This makes it possible to detect regressions and performance issues as soon as they appear, so teams can respond quickly and keep production applications reliable.
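As an illustration of regression detection, the sketch below compares current metric scores against a baseline and flags any metric that dropped by more than a tolerance. It is a generic example, not Agenta's implementation. The function `find_regressions`, the metric names, and the 0.05 tolerance are all assumptions, and it presumes that higher scores are better.

```python
from typing import Dict


def find_regressions(
    baseline: Dict[str, float],
    current: Dict[str, float],
    tolerance: float = 0.05,
) -> Dict[str, float]:
    """Return metrics whose score dropped by more than `tolerance`.

    Assumes higher is better. Metrics missing from `current` are skipped.
    The returned value for each metric is the size of the drop.
    """
    regressions: Dict[str, float] = {}
    for metric, base_score in baseline.items():
        if metric not in current:
            continue
        drop = base_score - current[metric]
        if drop > tolerance:
            regressions[metric] = round(drop, 4)
    return regressions


# Hypothetical scores from two evaluation runs.
baseline_scores = {"accuracy": 0.90, "faithfulness": 0.85}
current_scores = {"accuracy": 0.80, "faithfulness": 0.84}

regressions = find_regressions(baseline_scores, current_scores)
print(regressions)
```

The tolerance absorbs run-to-run noise, so a small fluctuation such as the `faithfulness` change above is not reported as a regression.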

Collaborative Development Across Teams

Agenta fosters collaboration among product managers, developers, and domain experts by creating a unified workflow. This ensures that all stakeholders can contribute to the development process, enhancing the quality of LLM applications through diverse insights and expertise.

Evidence-Based Decision Making

Agenta empowers teams to replace intuition with evidence in their decision-making processes. By utilizing automated evaluations and comprehensive performance tracking, teams can make informed choices that lead to better outcomes and more reliable AI applications.

Frequently Asked Questions

What is LLMOps?

LLMOps refers to the operational practices and tools used in the development and management of large language models. It encompasses processes for experimentation, evaluation, deployment, and monitoring of AI applications.

How does Agenta help in debugging AI systems?

Agenta provides detailed tracing of requests and allows for collaborative annotation of those traces. This enables teams to identify failure points accurately and turn any trace into a test, significantly streamlining the debugging process.

Is Agenta suitable for teams new to AI development?

Absolutely. Agenta is designed for both seasoned AI teams and those just starting out. Its user-friendly interface and comprehensive documentation make it accessible for teams at any stage of their AI development journey.

Can Agenta integrate with existing tech stacks?

Yes, Agenta seamlessly integrates with various frameworks and models, including LangChain and OpenAI. This flexibility allows teams to incorporate Agenta into their existing workflows without disruption.


Similar to Agenta

Game Server Backend

Game Server Backend revolutionizes multiplayer gaming by integrating player auth, data management, and server hosting into a single powerful API.

Headless Domains

Headless Domains empowers AI agents with portable, verifiable identities for seamless trust and transactions across digital platforms.

LoadTester

LoadTester revolutionizes performance engineering by orchestrating hyper-scalable HTTP and API load tests with zero infrastructure from your browser.

ProcessSpy

ProcessSpy revolutionizes macOS process monitoring with advanced features for real-time insights.